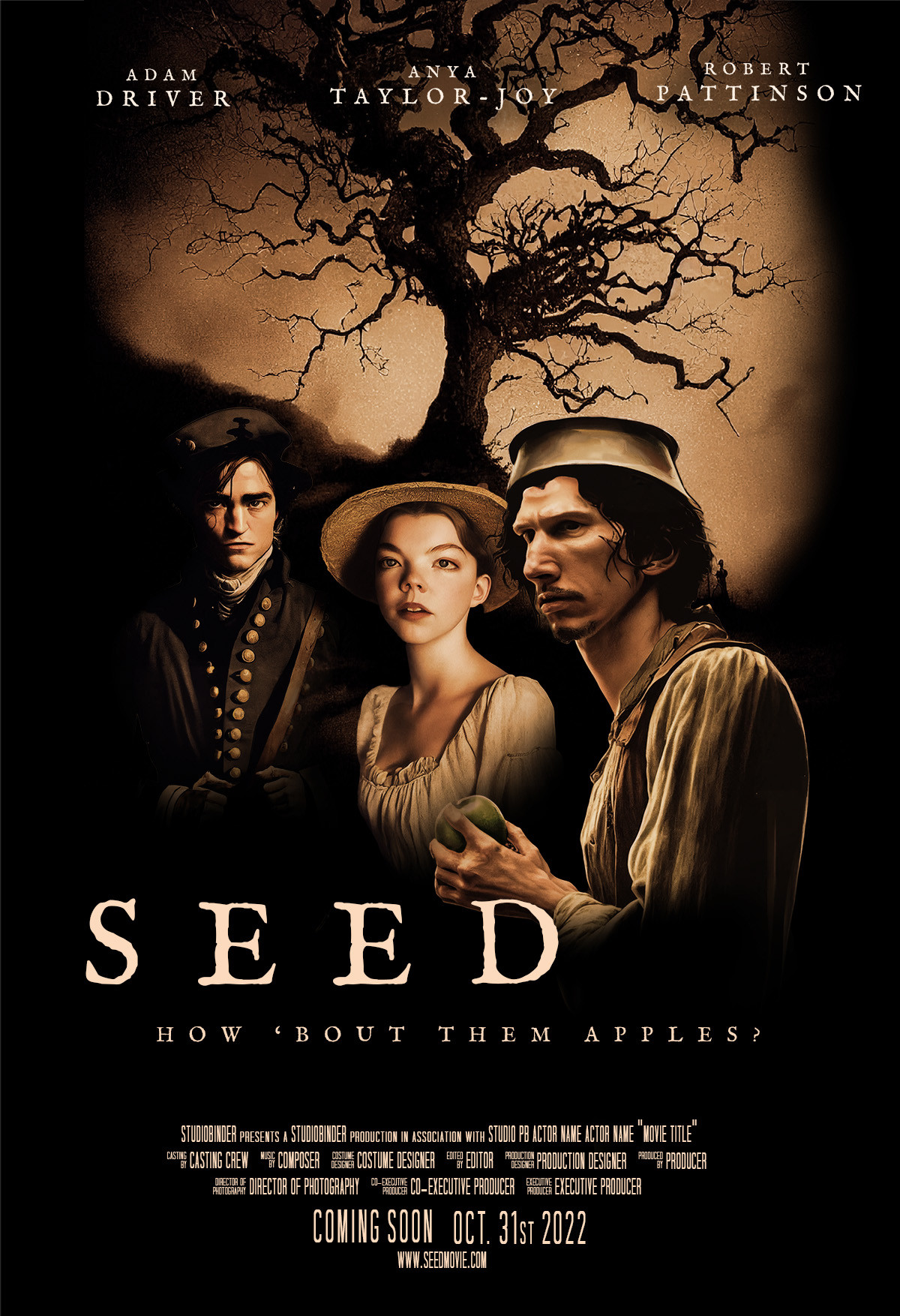

Spent a chunk of last weekend designing a poster for a non-existent movie using AI.

The concept was: "A southern gothic horror film about Johnny Appleseed."

Below is the result... all art assets created & edited with AIs (🧵):

With a general concept developed, it was time to generate the following assets with @midjourney_ai:

- A moody background image

- Robert Pattinson as "the soldier"

- Anya Taylor-Joy as "the soldier's wife"

- Adam Driver as Johnny Appleseed

All AI prompts followed a similar pattern and shared keywords:

"A poster of <subject> <vibe/action>. southern gothic, creepy, cinematic, dramatic lighting. --ar 2:3"

It took around 15-30 prompts per asset to find the right image.
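For anyone who wants to iterate on prompts the same way, the shared pattern can be sketched as a tiny Python helper. This is only an illustration: the template, keywords, and `--ar 2:3` flag come from the pattern quoted above, while `build_prompt` and the subject/vibe pairs are made-up names and examples, not the exact prompts used here.

```python
# Shared pieces taken from the prompt pattern quoted in the thread.
SHARED_KEYWORDS = "southern gothic, creepy, cinematic, dramatic lighting."
ASPECT_RATIO = "--ar 2:3"


def build_prompt(subject: str, vibe: str) -> str:
    """Fill the shared poster template with a subject and a vibe/action."""
    return f"A poster of {subject} {vibe}. {SHARED_KEYWORDS} {ASPECT_RATIO}"


# Hypothetical subject/vibe pairs (assumptions for illustration only;
# the actual wording tried for each asset was not shared).
assets = {
    "background": ("an abandoned apple orchard", "shrouded in fog"),
    "soldier": ("a weary soldier", "staring into the distance"),
}

for name, (subject, vibe) in assets.items():
    print(f"{name}: {build_prompt(subject, vibe)}")
```

Keeping the keywords in one place means every asset shares the same look, and only the subject and vibe change between the 15-30 attempts.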

A few samples of background generations:

Adam Driver as Johnny Appleseed...

This one was tricky. Midjourney can't handle "pan on the head" or "holding an apple" very well, so generated a base character and then added the pan + apple using DALL-E 2 "outpainting."

Robert Pattinson as "the soldier"...

Note the chosen image didn't include the lower vest. DALL-E 2 "outpainting" to the rescue again.

Finally, Anya Taylor-Joy as "the soldier's wife"...

(Midjourney was trying to add text to these, hence the unintelligible words.)

After generating the assets, used Photoshop's built-in "Select Subject" (also AI) to cut out the characters and composite them onto the background.

Add some movie poster typography and done.

It all took about 3 hours and cost less than $10.

Honestly found this whole process to be both amazing and a bit troubling.

Amazing because it felt like being an art director of a studio that could produce quality results practically in real-time and basically for free.

Troubling for the same reason.

There's great power but little responsibility in the current iteration of these tools, particularly around style attribution, credit, and likeness.

The next few years will be interesting and challenging for artists, to put it mildly.