A lesson some people learn too late:

Building a machine learning system is not like building regular software.

But unless you've done it before, you'd think it's all pretty much the same.

Here is a reason they are different and what you can do about it:

For the most part, regular software looks like this:

• Build once

• Run forever*

I know there's no such thing as *forever*. Things change all the time.

But this rate of change pales in comparison with machine learning systems.

Here is a real, straightforward example:

You build a deep learning model to process pictures that users capture with their phones.

The goal of the model: recognize different shoe brands in the pictures.

Everything works, but something happens:

Apple releases a new iPhone.

It ships with a beautiful, groundbreaking new camera that packs pixels differently.

The pictures look stunning, but the model starts having problems.

Guess what happened?

We trained our model on different pictures.

The world changed, but our model didn't.

The new pictures aren't different enough to break our model outright, but they are different enough to reduce its performance.

Unfortunately, there's more:

Nike just released a new pair of shoes. Adidas did the same.

Both go viral. Everyone is buying them!

When we trained our model, these shoes didn't exist. We didn't know about them, so our model doesn't either.

The model's performance takes another hit.

It's been a few weeks. We had an excellent system that turned into a mediocre one.

The model is the same. The world isn't.

In machine learning, we call this problem "drift."

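Specifically, I gave you examples of data and concept drift: the new camera changed what the inputs look like, and the new shoes changed what the model is expected to recognize.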
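
To make the camera example concrete, here is a minimal sketch of one common way to put a number on input drift: the Population Stability Index (PSI). Everything in it is an assumption for illustration: the `psi` helper, the idea of tracking "mean image brightness" as a feature, and the simulated values. It is not how any particular tool computes drift.

```python
import math
import random


def psi(reference, current, bins=10):
    """Population Stability Index between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if all values are equal

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the reference range are clamped into the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        eps = 1e-6  # avoids log(0) when a bin is empty
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))


# Hypothetical feature: mean brightness of each picture (simulated here).
rng = random.Random(0)
train_brightness = [rng.gauss(0.50, 0.10) for _ in range(5000)]
same_camera = [rng.gauss(0.50, 0.10) for _ in range(5000)]
new_camera = [rng.gauss(0.60, 0.12) for _ in range(5000)]  # the new phone

psi_same = psi(train_brightness, same_camera)
psi_new = psi(train_brightness, new_camera)
print(f"same camera: {psi_same:.3f}  new camera: {psi_new:.3f}")
```

A widely used rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but those cutoffs are conventions, not guarantees. Pick thresholds that make sense for your own data.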

It's natural for those who haven't built complete systems before to assume their work is done as soon as they finish training their model.

In reality, that's when the work truly starts.

Building a model is simple. Building a model that works is another ball game.

Interestingly, most people know how to tackle drift.

But they are missing a critical step: they only realize drift is happening when it's too late.

When everything breaks, they scramble to fix things.

You need to do better. You need good *monitoring*.

Monitoring helps you see problems coming.

More often than not, it gives you time to react before your system breaks.

I've tried to implement a monitoring system myself.

I'd rather eat glass, so I have a recommendation for you:

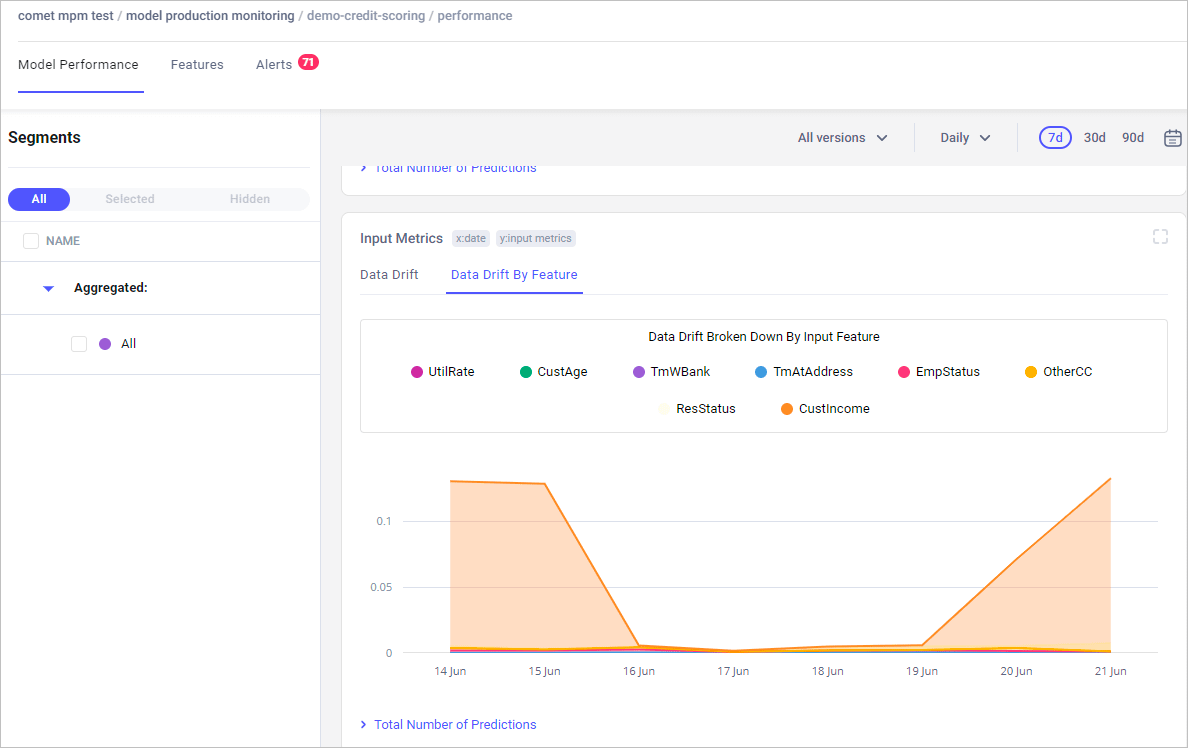

Look into @cometml's Model Production Monitoring:

comet.com/docs/v2/guides/model-production-monitoring/mpm-overview/?utm_source=svpino&utm_medium=referral&utm_campaign=online_partner_svpino_2022

You have access to 3 fundamental levers:

1. Identify if your model is struggling

2. Track drift across inputs and outputs

3. Get alerts if anything is wrong
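
If you want a feel for what these levers look like in code, here is a rough sketch under heavy assumptions. It is not Comet's API. `ConfidenceMonitor` is a hypothetical class that watches the rolling mean of the model's prediction confidence and returns an alert message when it drops too far below a baseline. Confidence is only a proxy, but it is often what you have, because true labels tend to arrive late or never.

```python
from collections import deque


class ConfidenceMonitor:
    """Tracks the rolling mean of prediction confidence and flags drops."""

    def __init__(self, baseline, window=100, tolerance=0.10):
        self.baseline = baseline    # mean confidence measured at validation time
        self.tolerance = tolerance  # allowed absolute drop before alerting
        self.scores = deque(maxlen=window)

    def record(self, confidence):
        """Add one prediction's confidence; return an alert string or None."""
        self.scores.append(confidence)
        if len(self.scores) < self.scores.maxlen:
            return None  # not enough data to judge yet
        rolling = sum(self.scores) / len(self.scores)
        if rolling < self.baseline - self.tolerance:
            return (
                f"ALERT: rolling confidence {rolling:.2f} "
                f"vs baseline {self.baseline:.2f}"
            )
        return None


# Simulated traffic: 50 healthy predictions, then 50 degraded ones.
monitor = ConfidenceMonitor(baseline=0.90, window=50, tolerance=0.10)
healthy = [monitor.record(0.90) for _ in range(50)]
degraded = [monitor.record(0.70) for _ in range(50)]

first_alert = next((msg for msg in degraded if msg is not None), None)
print(first_alert)
```

The window size and tolerance are made-up numbers. A short window reacts faster but fires more false alarms, so tune both against your own traffic.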

@cometml gives you what you need to answer two questions:

1. Am I going to have a problem with my model?

2. Do I already have a problem with my model?

Not having a robust monitoring system is like driving a car without a gas gauge.

For those who prefer to build everything in-house:

A poorly designed monitoring system is worse than no system at all.

A broken alarm at home that doesn't go off when the thieves come in will make you think everything is fine.

Until it's too late.

Every week, I break down machine learning concepts to give you ideas on applying them in real-life situations.

Follow me @svpino to ensure you don't miss what's coming next.