I've helped hundreds of people start with machine learning.

Everyone asks me the same fundamental question.

But they all hate my answer. Engineers even more.

Let me try again, but this time I'll show you a few lines of code that will 10x your process:

People always ask: "How do you know what to do now?"

The answer is simple, but nobody likes to hear it: "Well, you don't know."

After a few seconds, I follow up: "You need to experiment to find what works best."

Here is the reality they don't want to hear about:

Machine learning models are hard to optimize for any given problem.

There are just too many variables that we could change!

People aren't used to this. It's hard for them to move from "I know what will happen" to "I need to try and see."

Let's talk about one concrete example: "hyperparameters."

Think of these as "configuration settings." Depending on the values you choose, your model will perform differently.

These are the knobs and levers of every machine learning model.

Every model has different hyperparameters.

Here are a few common examples:

• learning rate

• batch size

• epochs

• regularization

The list goes on and on.
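
To make "your model will perform differently" concrete, here is a minimal sketch in plain Python. It is not a real model: the `train` function and its toy loss `(w - 3)^2` are assumptions I made up for illustration. The only things that change between runs are two hyperparameters, the learning rate and the number of epochs.

```python
def train(learning_rate, epochs):
    """Minimize the toy loss (w - 3)^2 with plain gradient descent.

    Hypothetical stand-in for a real training loop: the single weight `w`
    plays the role of the model, and the return value is the final loss.
    """
    w = 0.0
    for _ in range(epochs):
        gradient = 2 * (w - 3)        # derivative of (w - 3)^2 with respect to w
        w -= learning_rate * gradient
    return (w - 3) ** 2


# Same "model", same number of epochs, three different learning rates.
for lr in (0.01, 0.1, 1.1):
    loss = train(learning_rate=lr, epochs=20)
    print(f"learning_rate={lr}: final loss = {loss:.4g}")
```

With only 20 epochs, the smallest learning rate barely moves toward the minimum, the middle one gets close, and the largest one overshoots on every step and ends up further away than where it started. Same code, very different results.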

In a perfect world, we would find the combination of values for these hyperparameters that's ideal for our problem.

But finding these values is a headache. There are too many possibilities!
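
Here is a quick way to see how fast the possibilities pile up. The candidate values below are assumptions I picked for illustration, not recommendations, and `l2_regularization` is just one hypothetical way to name the regularization knob.

```python
from itertools import product

# Hypothetical search space: a handful of candidate values per hyperparameter.
search_space = {
    "learning_rate": [0.0001, 0.001, 0.01, 0.1],
    "batch_size": [16, 32, 64, 128],
    "epochs": [10, 20, 50],
    "l2_regularization": [0.0, 0.001, 0.01],
}

# Every combination of one value per hyperparameter.
combinations = list(product(*search_space.values()))
print(f"{len(combinations)} combinations to try")

# Assumed cost per training run, only to get a feel for the total.
minutes_per_run = 5
print(f"About {len(combinations) * minutes_per_run / 60:.0f} hours of training")
```

Four hyperparameters with three or four candidate values each already give you 144 combinations. Add one more hyperparameter or a finer grid, and the number multiplies again.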

We need something better.

I consider myself an organized person.

When I started building models, I kept a spreadsheet with different combinations of values.

Whenever I tried a set of hyperparameters, I carefully noted the values and the results.

There are two problems with this.

First problem:

You don't want to run your experiments manually. There's little chance you'll find an optimal combination by guessing.

Second problem:

Writing down values in a spreadsheet is cumbersome, suboptimal, and prone to errors.

To solve the "finding hyperparameters by hand" part, these are the libraries I use:

1. KerasTuner

2. Optuna

With these, you can automatically find the best hyperparameters for your model.
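
If you're wondering what "automatically" looks like, here is a minimal sketch of the simplest possible strategy: random search, in plain Python. This is not the KerasTuner or Optuna API. The `validation_loss` function is a made-up stand-in for "train a model and return its validation loss," and the search ranges are assumptions. Tuning libraries give you this kind of loop ready-made, along with smarter ways to pick the next values to try.

```python
import math
import random


def validation_loss(learning_rate, batch_size):
    """Hypothetical stand-in for training a model and scoring it.

    A made-up bowl-shaped function whose minimum sits at
    learning_rate=0.01 and batch_size=64.
    """
    return (math.log10(learning_rate) + 2) ** 2 + ((batch_size - 64) / 64) ** 2


def random_search(n_trials, seed=0):
    """Try n_trials random combinations and keep the best one."""
    rng = random.Random(seed)  # seeded so the run is repeatable
    best_params, best_loss = None, float("inf")
    for _ in range(n_trials):
        params = {
            # Sample the learning rate on a log scale between 1e-5 and 1.
            "learning_rate": 10 ** rng.uniform(-5, 0),
            "batch_size": rng.choice([16, 32, 64, 128]),
        }
        loss = validation_loss(**params)
        if loss < best_loss:
            best_params, best_loss = params, loss
    return best_params, best_loss


best_params, best_loss = random_search(n_trials=50)
print("Best hyperparameters:", best_params)
print("Best validation loss:", best_loss)
```

The important part is the shape of the loop: sample a combination, evaluate it, keep the best. You describe the search space once and let the machine do the guessing.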

For those who like code, here are two examples:

The first example uses KerasTuner to train a neural network on the Penguins dataset:

deepnote.com/@svpino/Keras-Tuner-z31YNDzcSQKAqJGZSqTDPQ

The second example uses Optuna to optimize an XGBRegressor and a CatBoostRegressor:

deepnote.com/@svpino/Tuning-Hyperparameters-with-Optuna-6hoSPY0vTiCPIpXwdHDVVw

That takes care of the first problem: running multiple experiments to find the best set of hyperparameters.

But how do you keep track of all of these experiments?

You can't rely on spreadsheets, at least not if you want to keep your sanity.

Here is where @Cometml enters the picture.

With a few lines of code, you can synchronize your work and keep track of everything you do.

Here is the same example code as before, but this time using @Cometml:

deepnote.com/@svpino/Keras-Tuner-CometML-f60a5301-1ad2-4b97-babc-7c7e12f1b45f

A couple of notes:

Notice that my code stayed the same. I just added a couple of lines to connect to @Cometml.

I get all sorts of metrics and charts right from @Cometml's interface. All of them for free!

Over time, you'll have a library of experiments with detailed metrics about everything you did.

You can compare experiments, reproduce them, filter, or go back to any one of them.
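
To make it concrete what an experiment tracker actually records, here is a hand-rolled sketch in plain Python. To be clear, this is not Comet's API. The function names, the record layout, and the `experiments.jsonl` file name are all assumptions of mine. A hosted tool does this for you and adds the charts, comparisons, and sharing on top.

```python
import json
import tempfile
import time
from pathlib import Path


def log_experiment(params, metrics, log_file):
    """Append one experiment (hyperparameters plus results) as a JSON line."""
    record = {"timestamp": time.time(), "params": params, "metrics": metrics}
    with Path(log_file).open("a") as f:
        f.write(json.dumps(record) + "\n")


def load_experiments(log_file):
    """Read every logged experiment back into a list of dicts."""
    path = Path(log_file)
    if not path.exists():
        return []
    with path.open() as f:
        return [json.loads(line) for line in f if line.strip()]


def best_experiment(experiments, metric="val_loss"):
    """Return the experiment with the lowest value for the given metric."""
    return min(experiments, key=lambda e: e["metrics"][metric])


# Demo in a temporary directory so nothing is left behind on disk.
# The numbers below are made up for illustration.
with tempfile.TemporaryDirectory() as tmp:
    log_file = Path(tmp) / "experiments.jsonl"  # hypothetical file name
    log_experiment({"learning_rate": 0.1, "batch_size": 32}, {"val_loss": 0.52}, log_file)
    log_experiment({"learning_rate": 0.01, "batch_size": 64}, {"val_loss": 0.31}, log_file)

    experiments = load_experiments(log_file)
    # Filter: only runs that used a batch size of 64.
    big_batches = [e for e in experiments if e["params"]["batch_size"] == 64]
    print(len(experiments), "experiments logged,", len(big_batches), "with batch_size=64")
    print("Best so far:", best_experiment(experiments)["params"])
```

Every run stores its hyperparameters next to its results, so comparing, filtering, and going back to an old run becomes a query instead of a hunt through a spreadsheet.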

You won't ever go back to manual tracking. I promise.

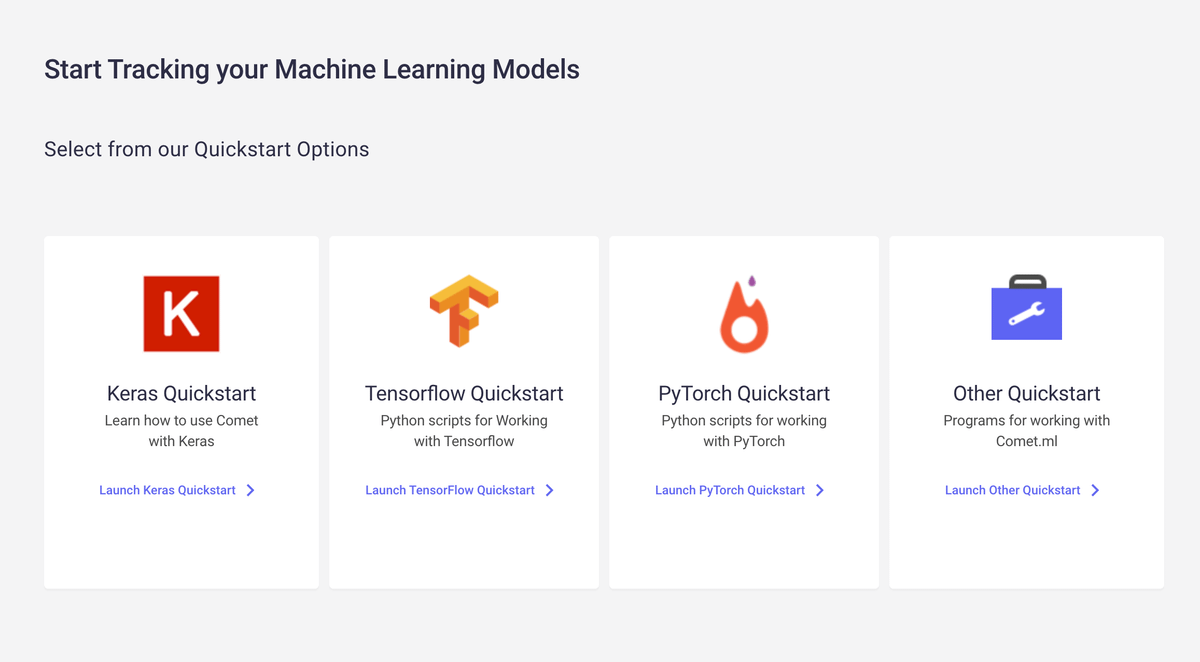

Here is the link I want you to bookmark:

comet.com/docs/?utm_source=svpino&utm_medium=social&utm_campaign=online_paii_2022

On this page, click the Quickstart guide for the library you use to find sample code.

It's a long list of supported libraries, so you'll find something that works for you.

Every week, I post one or two threads like this, breaking down machine learning concepts and giving you ideas for applying them in real-life situations.

Follow me @svpino and make sure you don't miss my next thread.