GLM-4.6V (from @Zai_org) is the real deal. It also sounds like Sonnet.

It punches pretty close to Sonnet 4 on coding tasks & visual understanding. This is the first OSS vision model that can critique designs at a genuinely useful level.

It's only been a few days since we were talking about Opus 4.5 closing the design loop, and it's crazy to see that open-source is catching up.

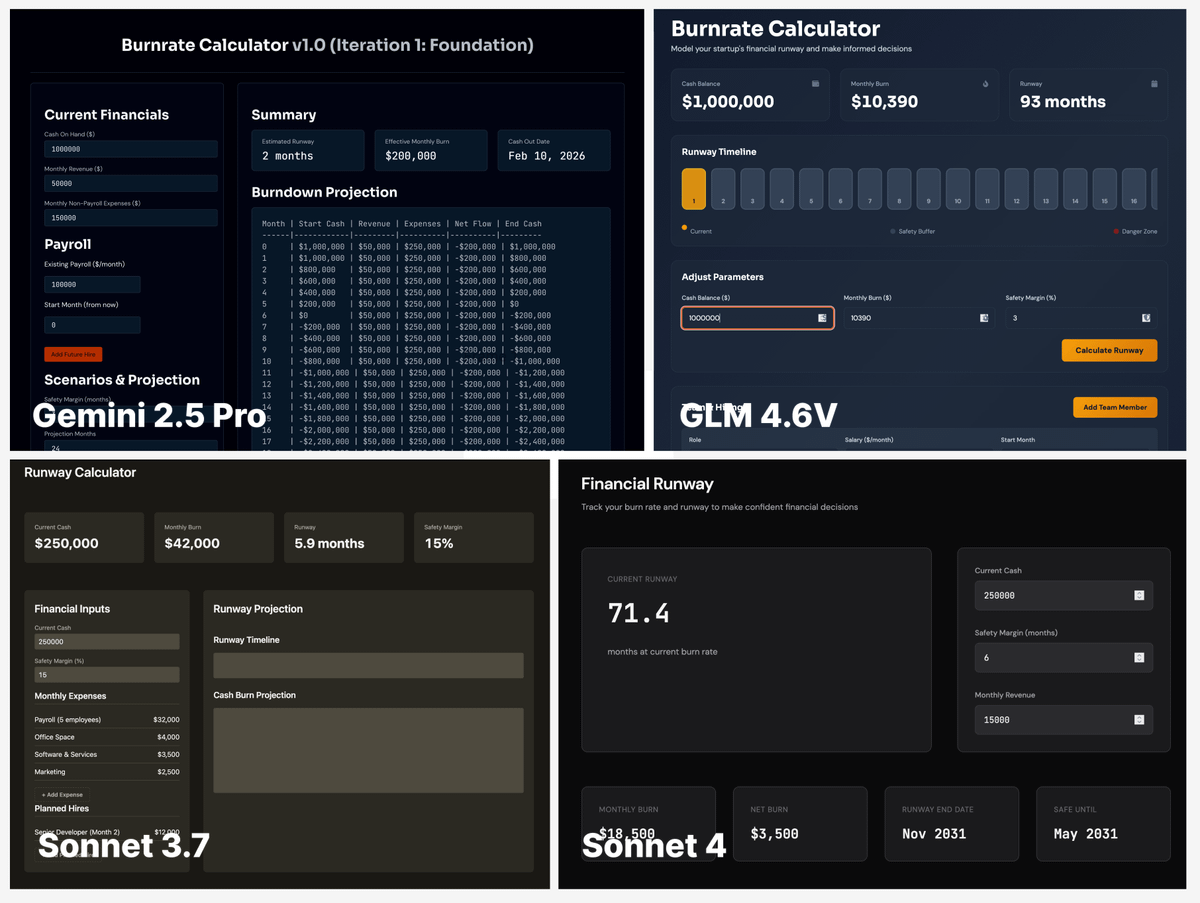

The main tests were on our internal design skill (a 10-page doc that goes from the basics of design all the way to an iterative improvement loop), and GLM is the first open-source model that can actually iterate with the doc. The task was simple: make a burn-rate calculator, with lots of areas to polish and lots of state to change.

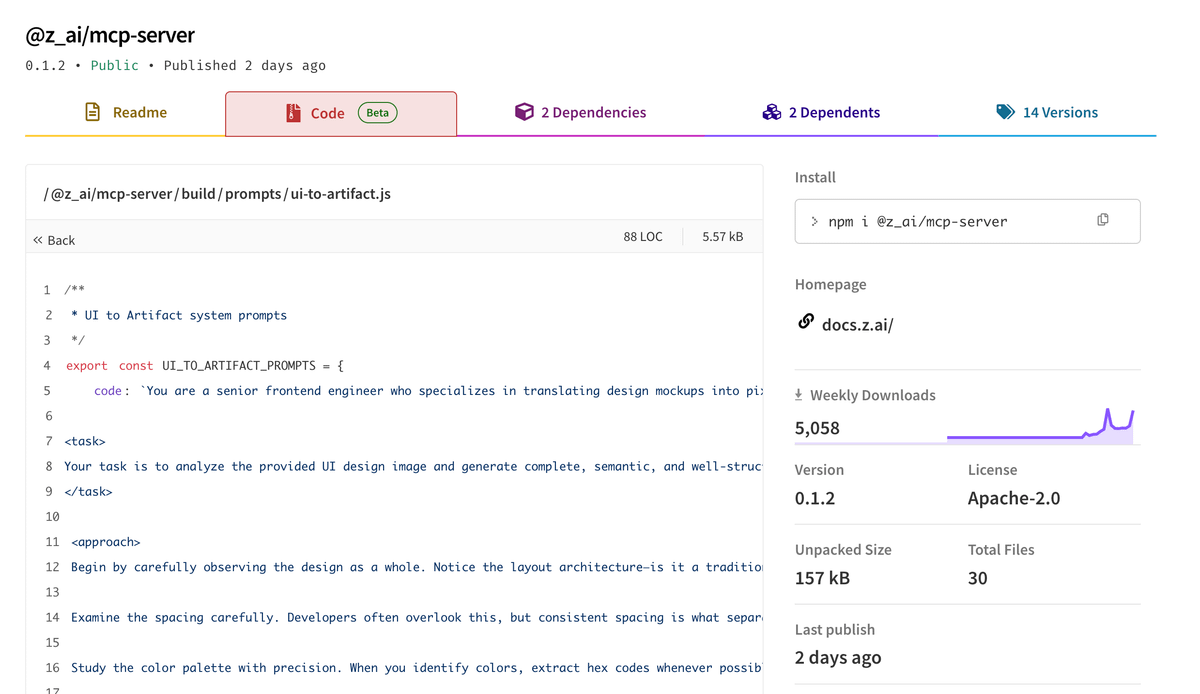

The best place to test it today is chat.z.ai. I don't think the Claude Code endpoint or the AI SDK playground integrate its vision capabilities properly. ZAI's vision MCP server seems to be the recommended route if you want to get those right.

I haven't tested the MCP server, but the included prompts are decent at getting results.

What's strange is how much it sounds like Sonnet. One of my vibe tests is to pass it this old rant from six years ago (olickel.com/quality-to-an-artificial-intelligence), which almost always prompts models into soliloquies.

Gemini, Sonnet, and GPT each have their own take. GLM's sounds remarkably like Sonnet's.

It's not perfect tho. It might still need some post-training: I did see a few loops (the same text repeating over and over) in my testing.

Overall this is a SOLID model, especially since it's priced cheaper than gemini-2.5-flash, which it beats hands down. What a time to be alive.

Anyway, here's the Opus journey:

x.com/hrishioa/status/1994112235178697040?s=20