I had the chance to go to Vancouver for @NeurIPSConf, one of the biggest conferences dedicated to Machine Learning and #AI.

It was a big week, and in this thread I'll summarize what I learned there :-)

Sunday is all about the very long lines to register.

There were 13k attendees this year!

twitter.com/Albertorizzoli/status/1203727250753015808

It’s also the day of the industry expo.

All the big players were there. Only @nvidia was missing.

It was fun to see @ArthurIMiller at the @mitpress booth.

mitpress.mit.edu/books/artist-machine

On Monday @katjahofmann gave a great overview of Reinforcement Learning: “RL, past, present and future”.

I recommend watching it if you are interested in the theoretical basis of RL:

slideslive.com/38921493/reinforcement-learning-past-present-and-future-perspectives

The talk from @celestekidd was the best invited talk IMO.

Because of the quality of her research (about how humans form beliefs), and also because of her #metoo statement.

Everyone in the room was impressed & inspired.

#StrongWomen #Courage

twitter.com/celestekidd/status/1204296384582602752

The poster session organized by @WiMLworkshop & @black_in_ai was amazing.

Like last year it helps to fight stereotypes just by showing that you can fill a *HUGE* space with excellent research done by women and black people.

twitter.com/WiMLworkshop/status/1204196628544012289

It’s sad to see that not everyone was able to attend because of where they were born:

twitter.com/SekouLRemy/status/1204166825862402048

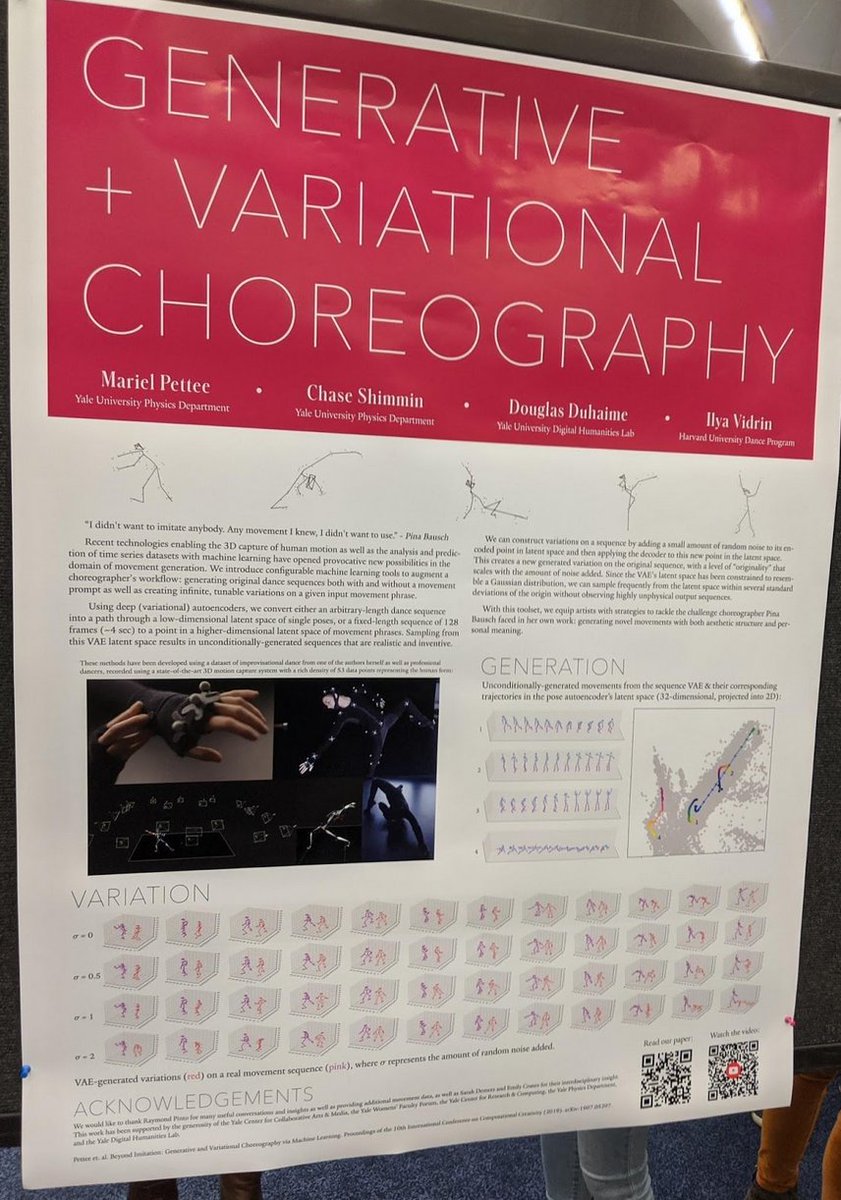

I liked the poster from @marielpettee about modeling dance. It's always amazing to talk directly to the person who did the research.

I also really enjoyed this technique that turns a rough cut-and-paste into a photo-realistic composition (using a GAN).

Tuesday, another great poster session:

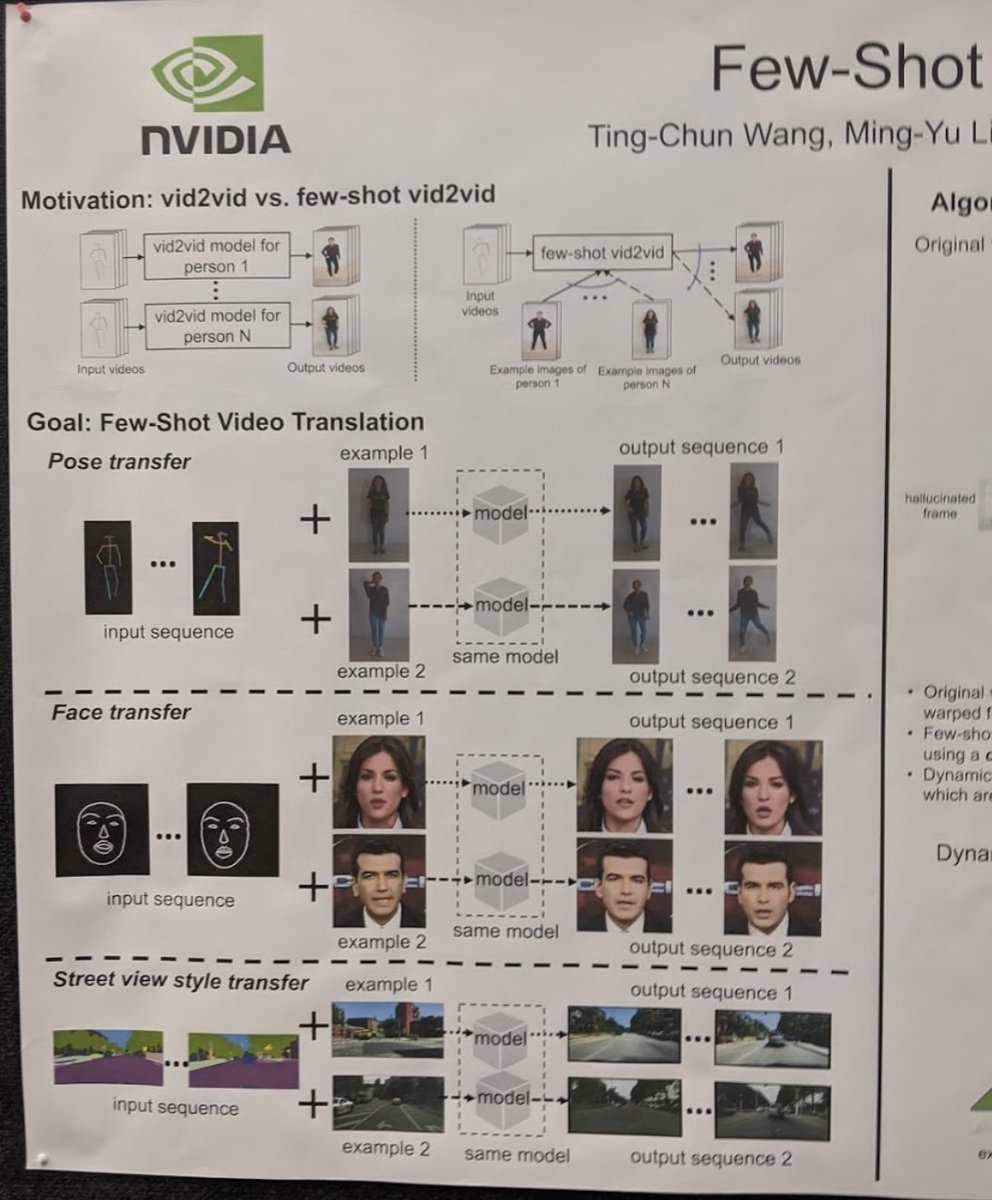

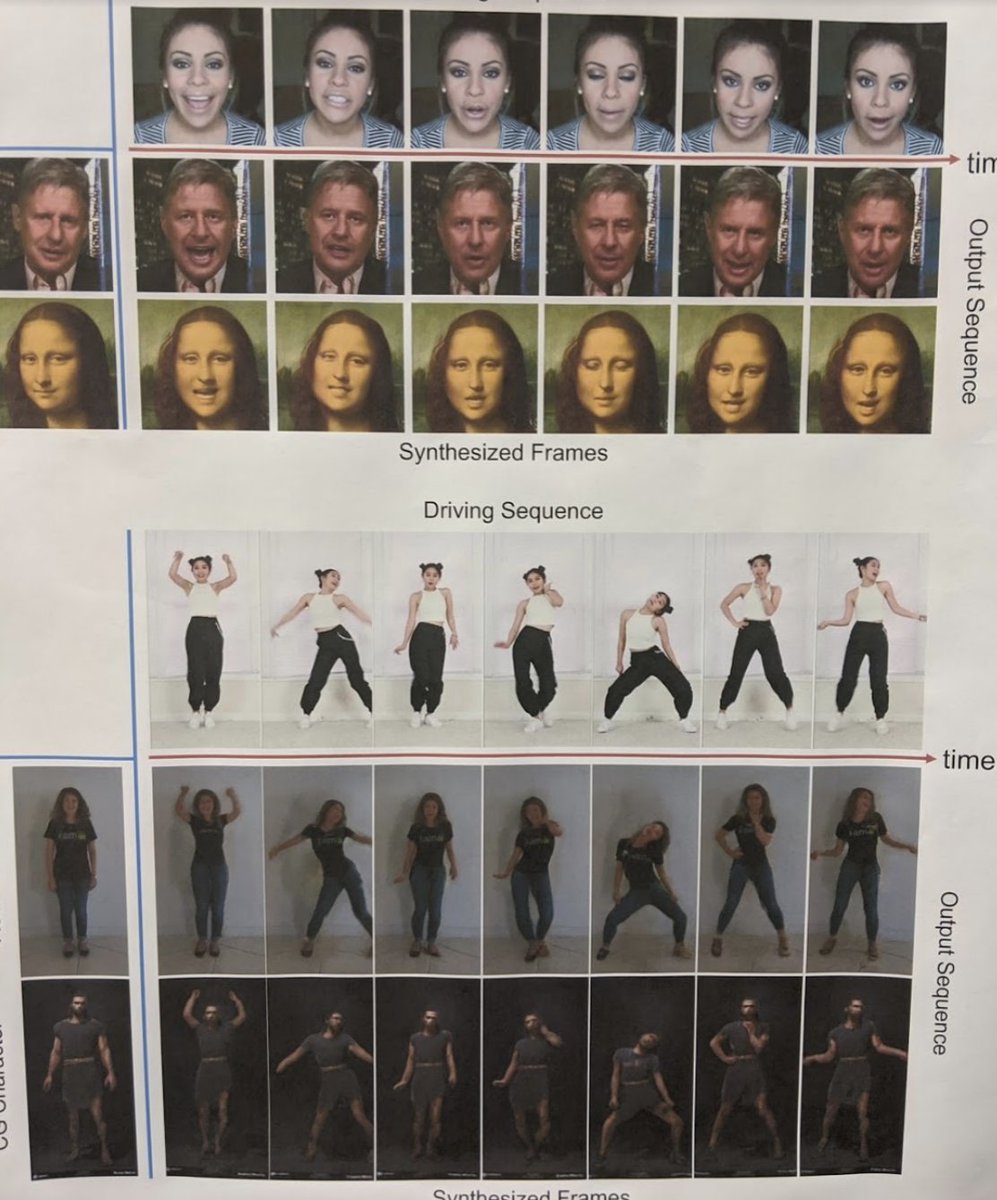

@NvidiaAI presented their Few Shot video translation, following their vid2vid from last year.

youtube.com/watch?v=8AZBuyEuDqc

And also dancing to music:

youtube.com/watch?v=-e9USqfwZ4A

See more here:

news.developer.nvidia.com/nvidia-dance-to-music-neurips/

Wednesday, @blaiseaguera opened the day with an amazing talk based on the idea that ML today can help when there is no loss function to optimize.

You can watch it here: slideslive.com/38921748/social-intelligence

From @blaiseaguera's talk:

“Every exponential is always the first part of a sigmoid”

👍👍👍👍👍👍👍👍👍👍👍👍👍👍👍👍👍👍

In your face, Singularity. 🤣

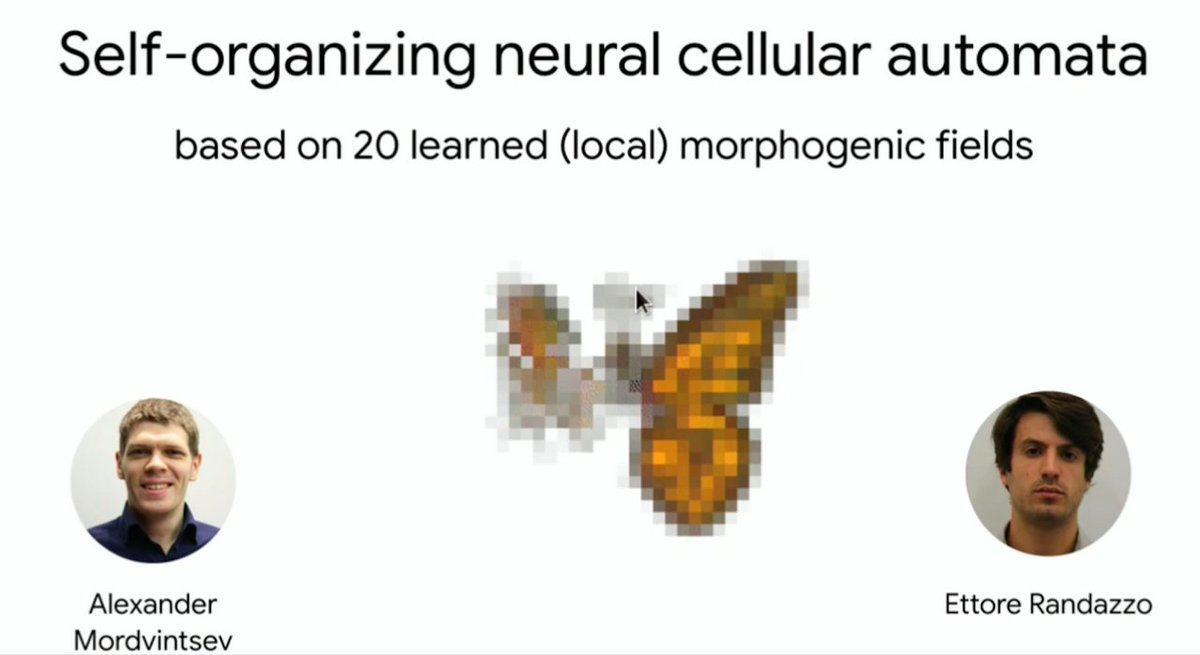
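A quick numeric sketch of that quote, under my own assumptions: I use the standard logistic curve as "the sigmoid", and the `ceiling`, `rate` and `midpoint` values are purely illustrative, not taken from the talk. Well before the midpoint the logistic curve is almost indistinguishable from a pure exponential; after it, the exponential keeps exploding while the sigmoid flattens out.

```python
import math

def logistic(t, ceiling=1.0, rate=1.0, midpoint=10.0):
    """Logistic (sigmoid) curve that saturates at `ceiling`."""
    return ceiling / (1.0 + math.exp(-rate * (t - midpoint)))

def early_exponential(t, ceiling=1.0, rate=1.0, midpoint=10.0):
    """Pure exponential that the logistic curve approaches for t << midpoint."""
    return ceiling * math.exp(rate * (t - midpoint))

def relative_gap(t):
    """How much the exponential overshoots the sigmoid, as a fraction of the sigmoid."""
    return early_exponential(t) / logistic(t) - 1.0

# Compare the two curves early on, at the midpoint, and after it.
for t in (0, 5, 10, 15):
    print(t, logistic(t), early_exponential(t), relative_gap(t))
```

With these illustrative parameters the gap is negligible at t = 0, the exponential is already double the sigmoid at the midpoint, and it is more than a hundred times larger by t = 15.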

He revealed *VERY* promising work by @zzznah & @RandazzoEttore.

Think of the Game of Life, where the rules are neural-network based & learned.

Then imagine that you draw a digit, and each cell colors itself to predict which digit it is. It converges, so that in the end the whole digit has the right color.

Wednesday is also the demo day.

The Artificial Ear from @idivinci won the “Best demo award”.

youtube.com/watch?v=aBkHO6oV-Mc

It is a cool #dataviz of a neural network which analyses music and is also able to synthesize audio.

More info here:

vincentherrmann.github.io/demos/immersions

Another demo, by DiPaola & Abukhodair from ivizlab.sfu.ca:

They created a virtual artist that draws your portrait after interviewing you.

More info here: ivizlab.org/research/ai_empathetic_pianter/

This is the painting it did of me:

Wednesday was also the day of Yoshua Bengio's talk.

Unfortunately, the last slide sums up the talk:

Bold claims,

Supported by…

nothing

with WTF illustrations and simplistic captions.

It's sad, because this guy has tons of interesting things to say and teach.

Thursday, one more poster session:

@NvidiaAI unveiled #StyleGAN2!

The best image generator so far.

github.com/NVlabs/stylegan2

It didn’t take long for @quasimondo to start exploring it:

twitter.com/quasimondo/status/1208774497077252097

Also a good poster about paraphrase generation:

More info here:

github.com/FranxYao/dgm_latent_bow

And this poster was mind-bending.

What if you have a webcam that records only an object that casts shadows on a wall?

What if you want to reconstruct what is in front of the object, opposite to the camera?

What if you don’t have any pairs to do the training?

Also, I got an explanation of the “Worse is better” design principle from Zachary DeVito.

It’s another way of saying “The best is the enemy of the good”, which makes total sense in computer (over)engineering.

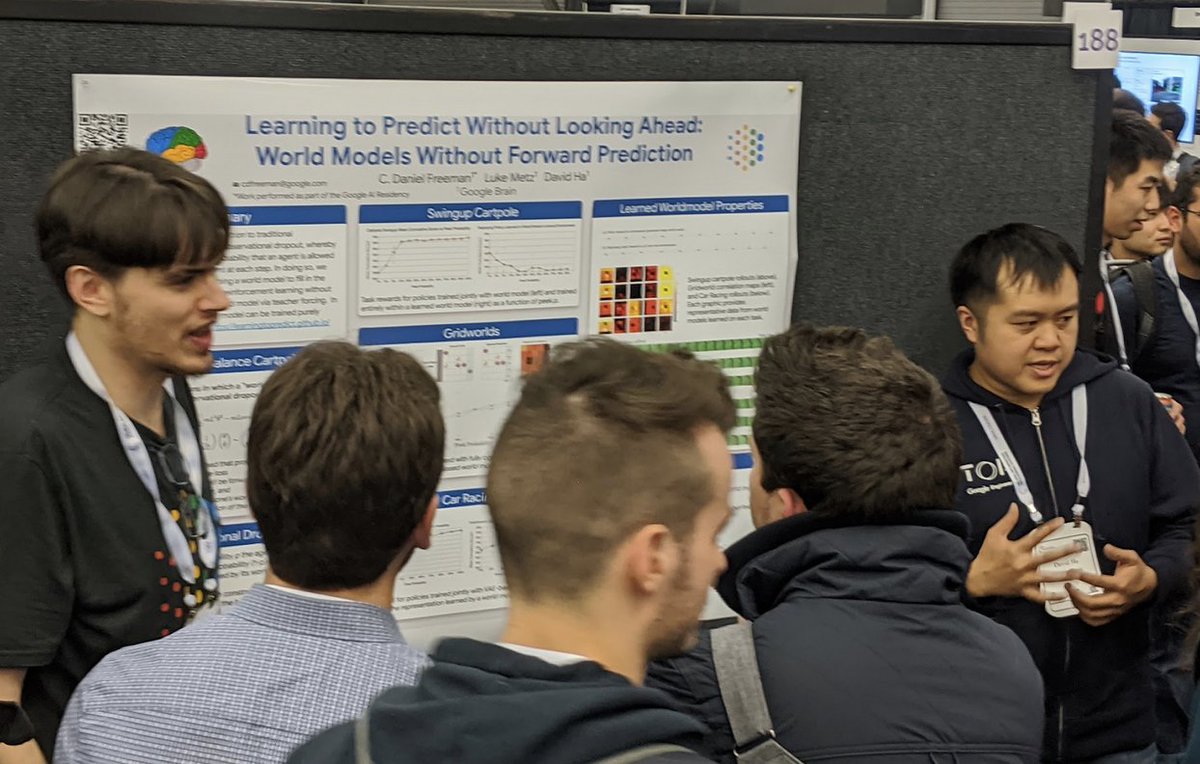

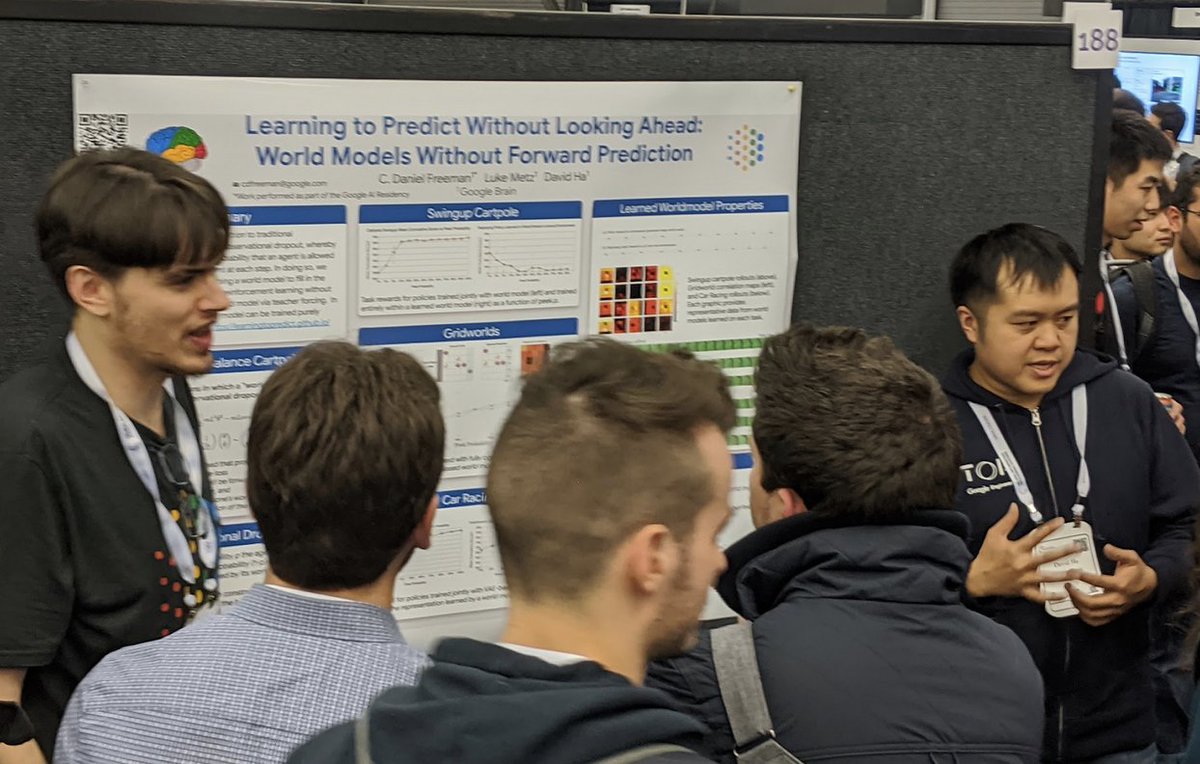

Friday, super busy poster from @hardmaru, as always :-)

He shows that a predictive model can emerge if an agent can’t observe every time step and so has to guess the state from time to time. Optimization is based only on the reward, with no direct comparison to the actual state.

Saturday was the highlight of the week for everyone interested in AI and creativity.

Organized by:

@elluba, @sedielem, @RebeccaFiebrink, @ada_rob, @jesseengel, @dribnet, @mcleavey, @pkmital, @naotokui_en

Full program here:

neurips2019creativity.github.io/

Everything was great:

About deepfakes, by @GiorgioPatrini:

1) The problem is not politics, it’s non-consensual videos targeting women.

2) Even if a video is not fake, someone can claim it is; there is no proof anymore.

3) Analysing the sensor footprint vs. the GAN footprint can work to detect fakes.

4) But ultimately you can create a GAN to simulate the sensor footprint, so we are doomed.

You can earn $1M if you find a way to detect fakes.

More here: ai.facebook.com/blog/deepfake-detection-challenge/

It was intriguing to see the images generated by @Maja_Petric_LA, which look like collages of images related to climate change.

More info here:

bbc.com/future/gallery/20190117-how-ai-can-help-us-understand-climate-change

The art from @JoanneHastieArt was interesting too.

twitter.com/JoanneHastieArt/status/1202233905778241536

@notwaldorf presented MidiMe, a cute sequencer based on @GoogleMagenta VAE

You can play with it here: midi-me.glitch.me/

All the posters were great; you can find the full list here:

neurips2019creativity.github.io/

Claire @YACHT explained how they used @GoogleMagenta to make their new album “Chain Tripping”.

One song, SCATTERHEAD, is on YT:

youtube.com/watch?v=lvFZQn69oxQ

The algorithm was trained on all their previous music and generated tons of melodies.

They decided they could not add notes, add harmonies, jam or improvise.

But they could choose the instruments, transpose melodies or cut them.

And they curated their lyrics out of text generated by @rossgoodwin.

The talk from @sougwen got a lot of attention too. You can learn more about her work by watching her TED talk: ted.com/talks/sougwen_chung_why_i_draw_with_robots

My best moment was when (two!) @ylecun presented the poster session.

slideslive.com/38922087/neurips-workshop-on-machine-learning-for-creativity-and-design-30-2

The first time I was 😂 at an ML conf.

Thanks @improbotics

#feelthelearn

There was also an online and onsite exhibit:

twitter.com/elluba/status/1207376577069277190

And like last year @dribnet was selling amazing artwork.

You can buy some of them here too: dribnet.bigcartel.com/

The full creativity workshop was recorded; here are all the links:

slideslive.com/38922086/neurips-workshop-on-machine-learning-for-creativity-and-design-30-1

slideslive.com/38922087/neurips-workshop-on-machine-learning-for-creativity-and-design-30-2

slideslive.com/38922088/neurips-workshop-on-machine-learning-for-creativity-and-design-30-3

slideslive.com/38922089/neurips-workshop-on-machine-learning-for-creativity-and-design-30-4

Time to conclude; here are my main takeaways:

1) End-to-end learning has lost its magical aura.

2) Adding priors to a network, by selecting the right architecture, is now the cool thing.

3) The concept of regret (how much better an agent could have done) is trending.
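To make "regret" concrete, here is a minimal sketch in the simplest setting I know of, a multi-armed bandit. The function name `cumulative_regret`, the arm means and the choices are all made up for illustration, and other papers define regret differently depending on the problem. The idea: sum, over every step, the gap between the expected reward of the best arm and that of the arm the agent actually pulled.

```python
def cumulative_regret(arm_means, chosen_arms):
    """Expected regret: total gap between always pulling the best arm
    and pulling the arms listed in `chosen_arms`."""
    best = max(arm_means)
    return sum(best - arm_means[arm] for arm in chosen_arms)

# Hypothetical 3-armed bandit: arm 2 is the best one.
arm_means = [0.2, 0.5, 0.8]

# An agent that tries each arm once, then sticks with the best.
choices = [0, 1, 2, 2, 2]

regret = cumulative_regret(arm_means, choices)
print(regret)
```

An agent that always pulls the best arm has zero regret; the two exploratory pulls above are what the agent "could have done better".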

But probably the biggest takeaway:

NLP is having the same revolution that computer vision had in 2010.

medium.com/@thresholdvc/neurips-2019-entering-the-golden-age-of-nlp-c8f8e4116f9d

Overall, same conclusion as last year:

The industry will need at least a decade to take advantage of all the research done so far.

And of course, the main thing at @NeurIPSConf is all the people you can meet there.

I can’t name them all here, but that’s really the reason why you want to attend in person.

That's all folks!

And if you want to read last year's thread, it's here:

twitter.com/dh7net/status/1071947223020331010?s=20
